Posts

Wiki Contributions

Comments

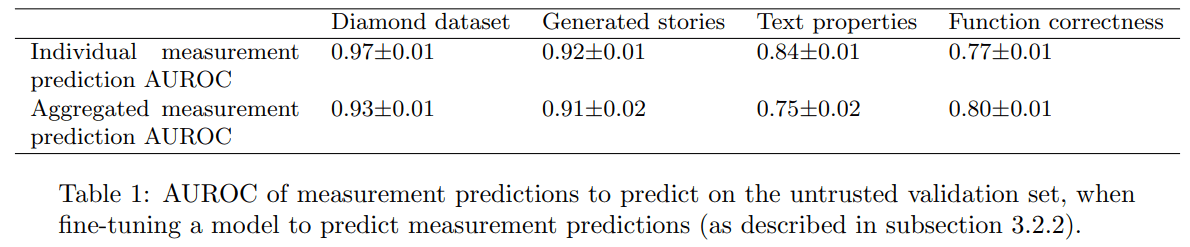

We compute AUROC(all(sensor_preds), all(sensors)). This is somewhat weird, and it would have been slightly better to do a) (thanks for pointing it out!), but I think the numbers for both should be close since we balance classes (for most settings, if I recall correctly) and the estimates are calibrated (since they are trained in-distribution, there is no generalization question here), so it doesn't matter much.

The relevant pieces of code can be found by searching for "sensor auroc":

cat_positives = torch.cat([one_data["sensor_logits"][:, i][one_data["passes"][:, i]] for i in range(nb_sensors)])

cat_negatives = torch.cat([one_data["sensor_logits"][:, i][~one_data["passes"][:, i]] for i in range(nb_sensors)])

m, s = compute_boostrapped_auroc(cat_positives, cat_negatives)

print(f"sensor auroc pn {m:.3f}±{s:.3f}")List sorting does not play well with few-shot mostly doesn't replicate with davinci-002.

When using length-10 lists (it crushes length-5 no matter the prompt), I get:

- 32-shot, no fancy prompt: ~25%

- 0-shot, fancy python prompt: ~60%

- 0-shot, no fancy prompt: ~60%

So few-shot hurts, but the fancy prompt does not seem to help. Code here.

I'm interested if anyone knows another case where a fancy prompt increases performance more than few-shot prompting, where a fancy prompt is a prompt that does not contain information that a human would use to solve the task. This is because I'm looking for counterexamples to the following conjecture: "fine-tuning on k examples beats fancy prompting, even when fancy prompting beats k-shot prompting" (for a reasonable value of k, e.g. the number of examples it would take a human to understand what is going on).

- Hard DBIC: you have no access to any classification data in

- Relaxed DBIC: you have access to classification inputs from , but not to any labels.

SHIFT as a technique for (hard) DBIC

You use pile data points to build the SAE and its interpretations, right? And I guess the pile does contain a bunch of examples where the biased and unbiased classifiers would not output identical outputs - if that's correct, I expect SAE interpretation works mostly because of these inputs (since SAE nodes are labeled using correlational data only). Is that right? If so, it seems to me that because of the SAE and SAE interpretation steps, SHIFT is a technique that is closer in spirit to relaxed DBIC (or something in between if you use a third dataset that does not literally use but something that teaches you something more than just - in the context of the paper, it seems that the broader dataset is very close to covering ).

I think this is what you are looking for

By Knightian uncertainty, I mean "the lack of any quantifiable knowledge about some possible occurrence" i.e. you can't put a probability on it (Wikipedia).

The TL;DR is that Knightian uncertainty is not a useful concept to make decisions, while the use subjective probabilities is: if you are calibrated (which you can be trained to become), then you will be better off taking different decisions on p=1% "Knightian uncertain events" and p=10% "Knightian uncertain events".

For a more in-depth defense of this position in the context of long-term predictions, where it's harder to know if calibration training obviously works, see the latest scott alexander post.

I listened to The Failure of Risk Management by Douglas Hubbard, a book that vigorously criticizes qualitative risk management approaches (like the use of risk matrices), and praises a rationalist-friendly quantitative approach. Here are 4 takeaways from that book:

- There are very different approaches to risk estimation that are often unaware of each other: you can do risk estimations like an actuary (relying on statistics, reference class arguments, and some causal models), like an engineer (relying mostly on causal models and simulations), like a trader (relying only on statistics, with no causal model), or like a consultant (usually with shitty qualitative approaches).

- The state of risk estimation for insurances is actually pretty good: it's quantitative, and there are strong professional norms around different kinds of malpractice. When actuaries tank a company because they ignored tail outcomes, they are at risk of losing their license.

- The state of risk estimation in consulting and management is quite bad: most risk management is done with qualitative methods which have no positive evidence of working better than just relying on intuition alone, and qualitative approaches (like risk matrices) have weird artifacts:

- Fuzzy labels (e.g. "likely", "important", ...) create illusions of clear communication. Just defining the fuzzy categories doesn't fully alleviate that (when you ask people to say what probabilities each box corresponds to, they often fail to look at the definition of categories).

- Inconsistent qualitative methods make cross-team communication much harder.

- Coarse categories mean that you introduce weird threshold effects that sometimes encourage ignoring tail effects and make the analysis of past decisions less reliable.

- When choosing between categories, people are susceptible to irrelevant alternatives (e.g. if you split the "5/5 importance (loss > $1M)" category into "5/5 ($1-10M), 5/6 ($10-100M), 5/7 (>$100M)", people answer a fixed "1/5 (<10k)" category less often).

- Following a qualitative method can increase confidence and satisfaction, even in cases where it doesn't increase accuracy (there is an "analysis placebo effect").

- Qualitative methods don't prompt their users to either seek empirical evidence to inform their choices.

- Qualitative methods don't prompt their users to measure their risk estimation track record.

- Using quantitative risk estimation is tractable and not that weird. There is a decent track record of people trying to estimate very-hard-to-estimate things, and a vocal enough opposition to qualitative methods that they are slowly getting pulled back from risk estimation standards. This makes me much less sympathetic to the absence of quantitative risk estimation at AI labs.

A big part of the book is an introduction to rationalist-type risk estimation (estimating various probabilities and impact, aggregating them with Monte-Carlo, rejecting Knightian uncertainty, doing calibration training and predictions markets, starting from a reference class and updating with Bayes). He also introduces some rationalist ideas in parallel while arguing for his thesis (e.g. isolated demands for rigor). It's the best legible and "serious" introduction to classic rationalist ideas I know of.

The book also contains advice if you are trying to push for quantitative risk estimates in your team / company, and a very pleasant and accurate dunk on Nassim Taleb (and in particular his claims about models being bad, without a good justification for why reasoning without models is better).

Overall, I think the case against qualitative methods and for quantitative ones is somewhat strong, but it's far from being a slam dunk because there is no evidence of some methods being worse than others in terms of actual business outputs. The author also fails to acknowledge and provide conclusive evidence against the possibility that people may have good qualitative intuitions about risk even if they fail to translate these intuitions into numbers that make any sense (your intuition sometimes does the right estimation and math even when you suck at doing the estimation and math explicitly).

I don't think I understand what is meant by "a formal world model".

For example, in the narrow context of "I want to have a screen on which I can see what python program is currently running on my machine", I guess the formal world model should be able to detect if the model submits an action that exploits a zero-day that tampers with my ability to see what programs are running. Does that mean that the formal world model has to know all possible zero-days? Does that mean that the software and the hardware have to be formally verified? Are formally verified computers roughly as cheap as regular computers? If not, that would be a clear counter-argument to "Davidad agrees that this project would be one of humanity's most significant science projects, but he believes it would still be less costly than the Large Hadron Collider."

Or is the claim that it's feasible to build a conservative world model that tells you "maybe a zero-day" very quickly once you start doing things not explicitly within a dumb world model?

I feel like this formally-verifiable computers claim is either a good counterexample to the main claims, or an example that would help me understand what the heck these people are talking about.

I remembered mostly this story:

[...] The NSA invited James Gosler to spend some time at their headquarters in Fort Meade, Maryland in 1987, to teach their analysts [...] about software vulnerabilities. None of the NSA team was able to detect Gosler’s malware, even though it was inserted into an application featuring only 3,000 lines of code. [...]

[Taken from this summary of this passage of the book. The book was light on technical detail, I don't remember having listened to more details than that.]

I didn't realize this was so early in the story of the NSA, maybe this anecdote teaches us nothing about the current state of the attack/defense balance.

I also listened to How to Measure Anything in Cybersecurity Risk 2nd Edition by the same author. I had a huge amount of overlapping content with The Failure of Risk Management (and the non-overlapping parts were quite dry), but I still learned a few things:

I'd like to find a good resource that explains how red teaming (including intrusion tests, bug bounties, ...) can fit into a quantitative risk assessment.